Open-World Visual Understanding

Understanding actions, objects, scenes, and interactions when categories, contexts, temporal structure, and goals are not fixed in advance.

Ph.D. Student · Computer Vision · Multimodal Learning

I study how visual AI can understand people, actions, objects, and environments in open, changing worlds. My research connects computer vision, multimodal perception, and embodied intelligence, with a long-term interest in models that reason, generalize, and learn beyond closed datasets.

I am a Ph.D. student in the CV:HCI Lab at Karlsruhe Institute of Technology, supervised by Prof. Rainer Stiefelhagen. Since April 2024, my work has spanned fine-grained action and scene understanding, human-object interaction, open-set and domain-generalized learning, noisy-label learning, and benchmarks for industrial, wearable, and microgravity settings. My publications include work at ECCV, NeurIPS, IROS, ICLR, ICRA, and IJCV, together with public datasets, benchmarks, and code. I am broadly open to research collaborations, student projects, and thesis supervision across computer vision and AI, including directions beyond my current publication topics.

Research Agenda

Understanding actions, objects, scenes, and interactions when categories, contexts, temporal structure, and goals are not fixed in advance.

Connecting video, language, spatial cues, and human context so visual models can reason about activity, affordance, and future actions in the physical world.

Training and evaluating models under noisy labels, scarce annotations, domain shifts, uncertain evidence, and deployment pressure.

Selected Work

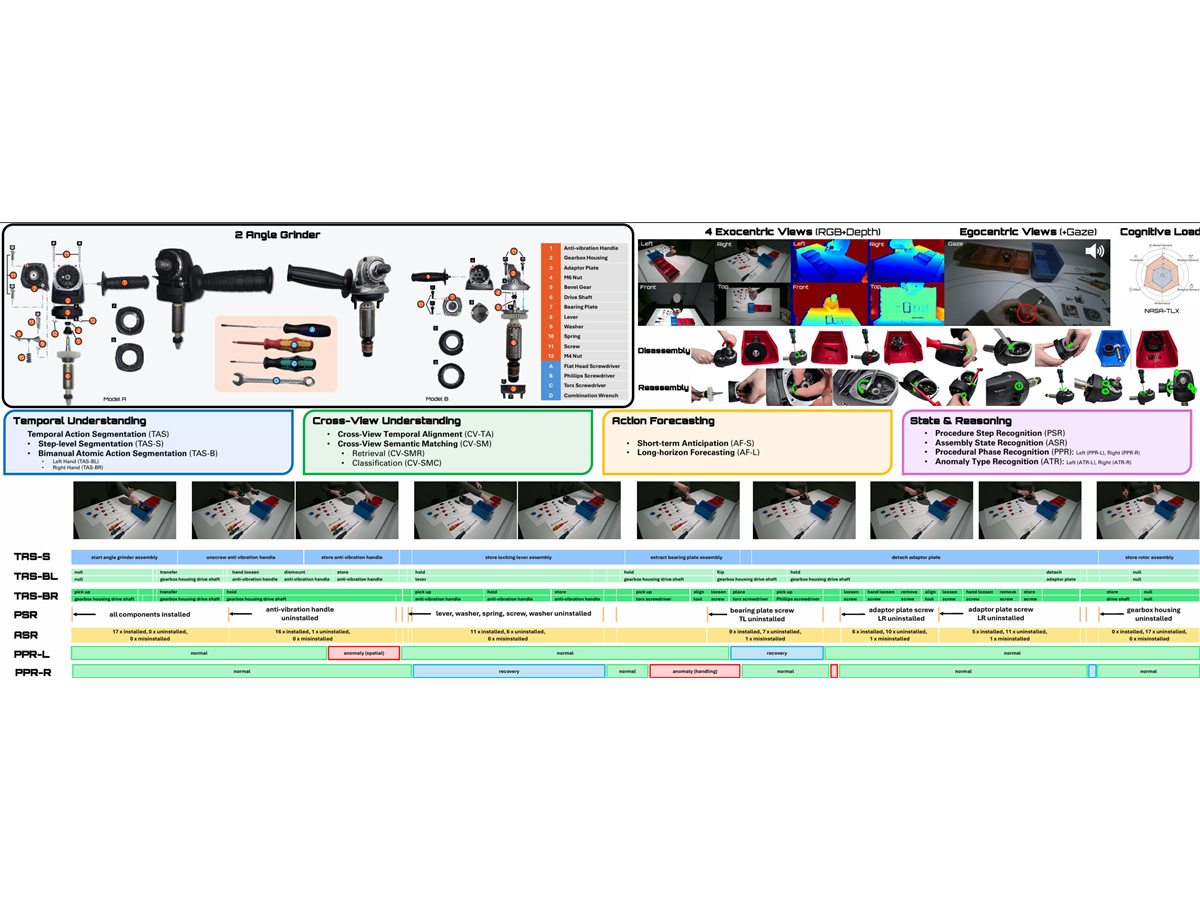

arXiv 2026

A multi-granularity industrial assembly dataset for procedural action understanding across videos, annotations, and benchmark tasks.

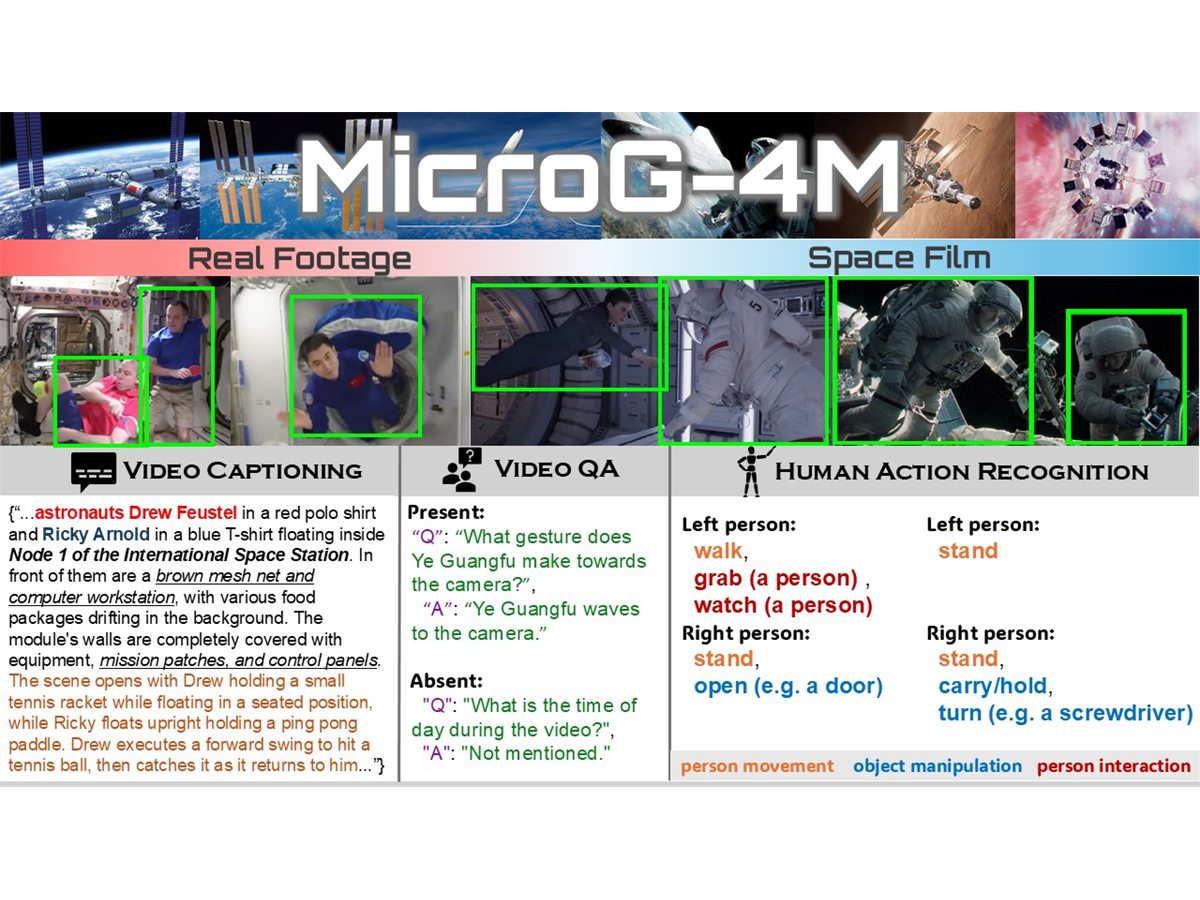

ICLR 2026

A benchmark direction for human action and scene understanding in microgravity environments.

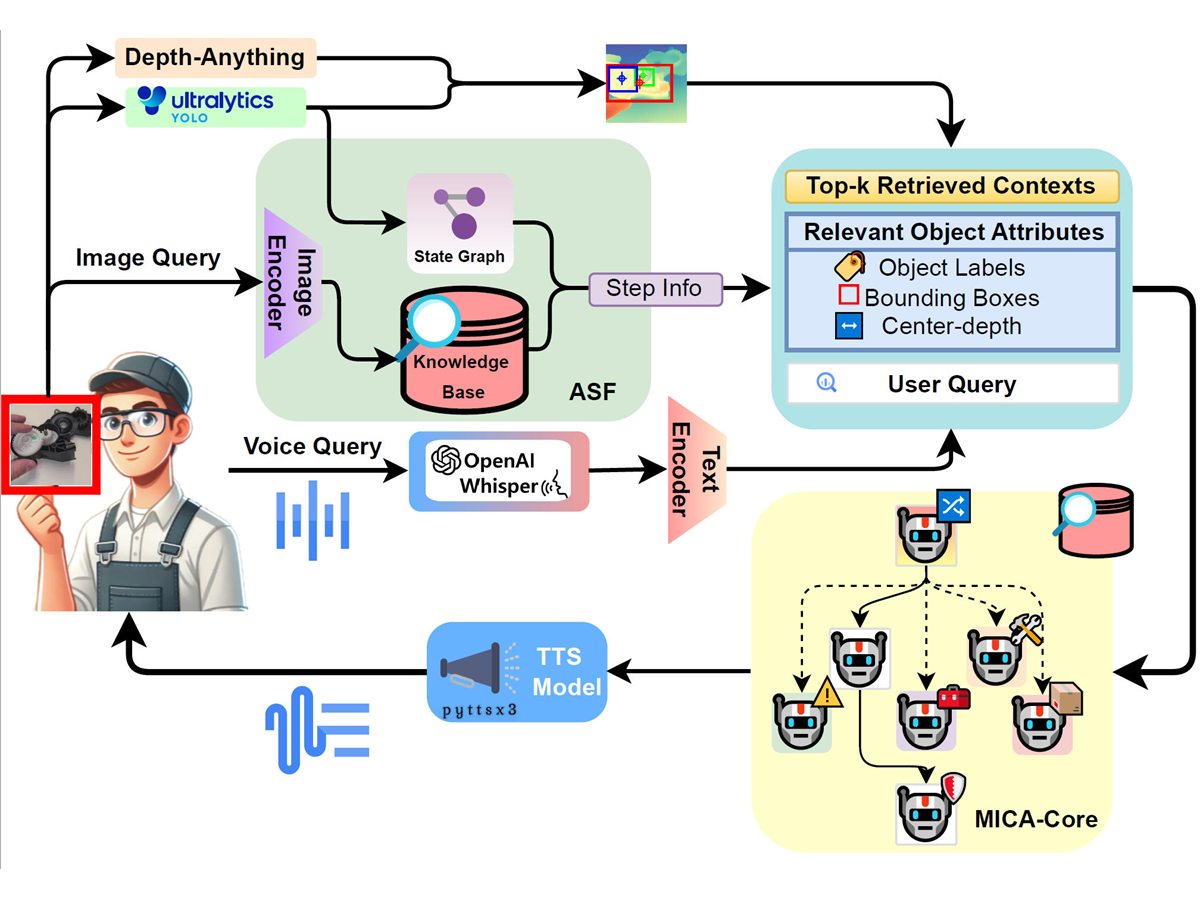

ICRA 2026

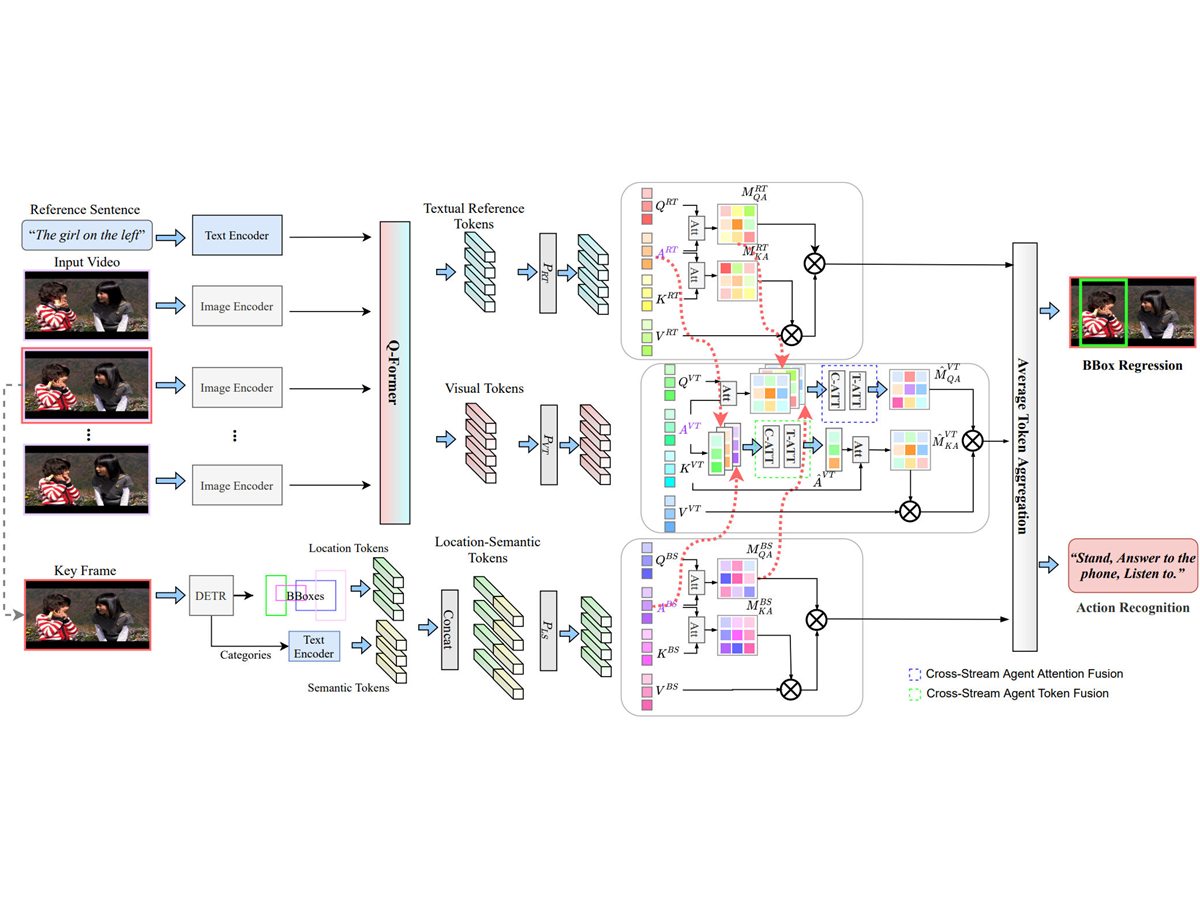

A multi-agent assistant for industrial coordination, combining perception, recognition, and interactive reasoning.

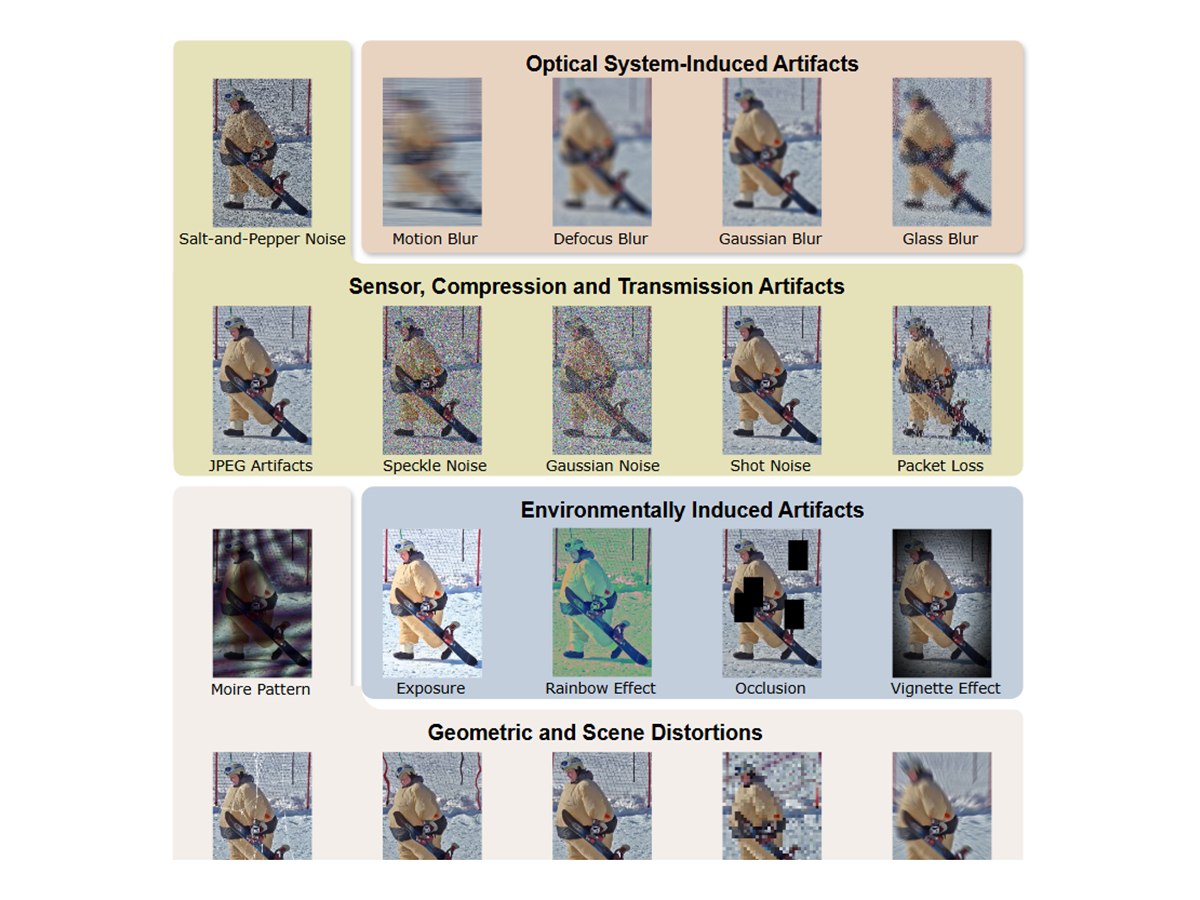

arXiv 2025

A robustness benchmark for evaluating human-object interaction detection under realistic shifts.

SMC 2025

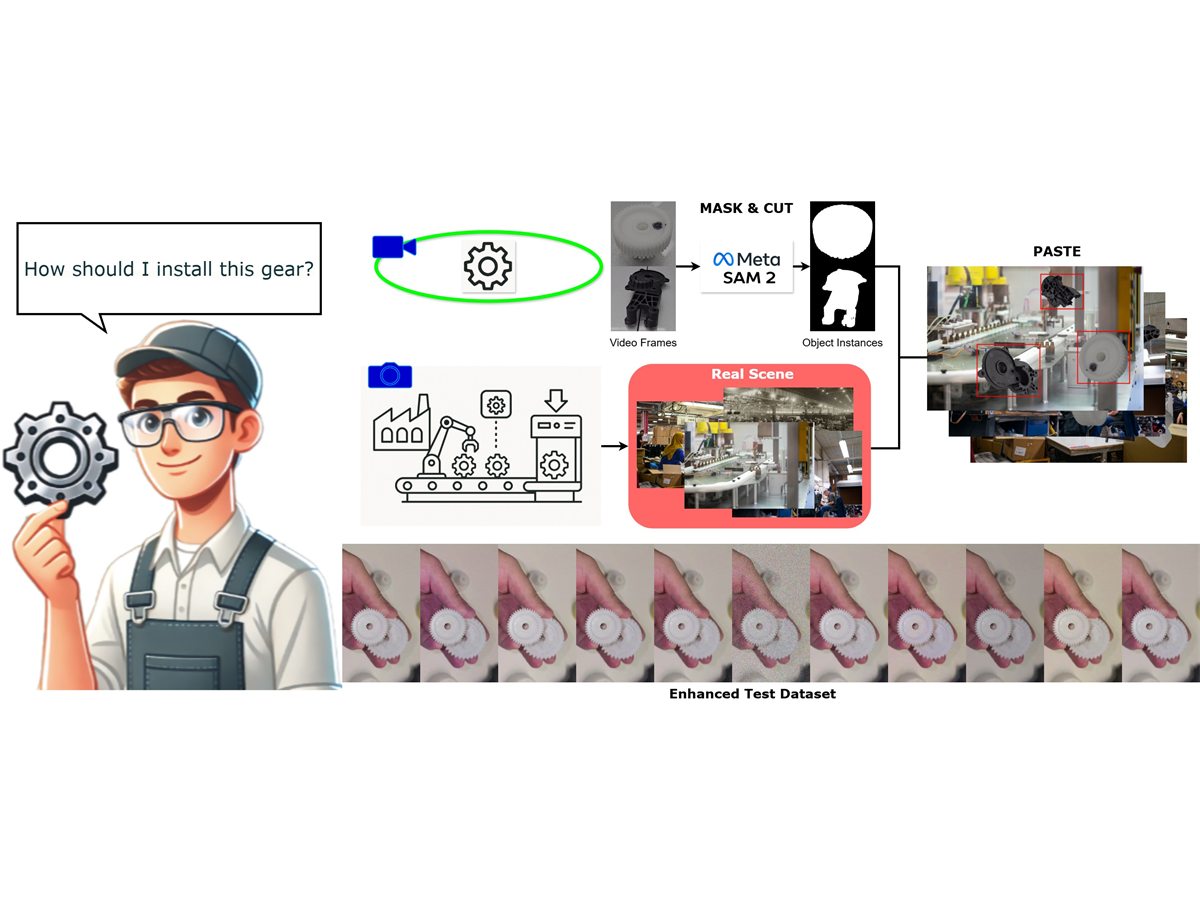

A wearable industrial assistant pipeline for visual data capture, segmentation, and deployment.

ECCV 2024

A fine-grained video action recognition task centered on referring to atomic actions in video.

Public Releases

A curated set of public datasets, benchmarks, and code releases that accompany my papers. For the complete publication record, please see the publication list or Google Scholar.

Public Dataset

Multi-view procedural action understanding dataset for industrial assembly.

Robustness Benchmark

Robustness benchmark for human-object interaction detection.

Research Code

Multi-agent industrial coordination assistant for recognition and interactive reasoning.

Research Pipeline

Visual data and detection pipeline for wearable industrial assistants.

Contact

I welcome research conversations, collaborations, student projects, and thesis supervision across computer vision and AI. I am especially interested in ideas that connect perception with reasoning, multimodal learning, embodied intelligence, scientific or industrial applications, and rigorous evaluation, and I am happy to discuss directions beyond the topics listed here.